Lab 9: Interactions between continuous variables

PSYC 7804 - Regression with Lab

Spring 2025

Today’s Packages and Data 🤗

Why Interactions?

The idea of an interaction is that the effects that two predictors \(X_1\) and \(X_2\) have on an outcome \(Y\) are fundamentally tied together.

- More specifically, the effect that \(X_1\) has on \(Y\) depends on the value of \(X_2\), and the effect that \(X_2\) has on \(Y\) depends on the value of \(X_1\).

A very clear example of an interaction effect is how an individual’s gender and race jointly affect the number of daily microaggressions they experience. Looking at gender and race separately would miss much of the picture (intersectionality!)

If we believe that two variables should interact in how they influence \(Y\), we can express that with the regression model:

\[ \hat{Y} = b_0 + b_1X_1 + b_2X_2 + b_3(X_1 \times X_2) \]
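As a minimal sketch of how this model can be specified in R, the block below simulates two predictors and an outcome and fits the model with `lm()`. The data, the names `x1`, `x2`, `y`, `sim_dat`, and the coefficient values used to generate the data are all made up for illustration. It shows that `y ~ x1 * x2` is shorthand for `y ~ x1 + x2 + x1:x2`.

```r
# Simulated data: all names and values here are illustrative assumptions
set.seed(7804)
n  <- 200
x1 <- rnorm(n)
x2 <- rnorm(n)
y  <- 1 + 0.5 * x1 + 0.3 * x2 + 0.4 * x1 * x2 + rnorm(n)
sim_dat <- data.frame(y, x1, x2)

# `x1 * x2` expands to both main effects plus their product
fit_star <- lm(y ~ x1 * x2, data = sim_dat)
fit_long <- lm(y ~ x1 + x2 + x1:x2, data = sim_dat)

# The two formulas give the same coefficients b0, b1, b2, b3
coef(fit_star)
all.equal(coef(fit_star), coef(fit_long))
```

The coefficient labeled `x1:x2` plays the role of \(b_3\) in the equation above.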

By the way, Interaction or Moderation?

I prefer the term interaction to avoid confusion with the term mediation, which has absolutely nothing to do with anything discussed in this Lab. That being said …

When hypothesizing interaction effects, it is useful to make a distinction between a focal predictor and a moderator.

Our hypothesis

We hypothesize that the final grade (final, \(Y\)) is predicted by how many classes a student attends (attend, \(X_1\)). We also think that the effect that attend has on final is moderated by prior GPA before starting the class (priGPA, \(X_2\)).

Call:

lm(formula = final ~ attend * priGPA, data = grade_fin)

Residuals:

Min 1Q Median 3Q Max

-16.4387 -2.8093 -0.2672 3.0143 11.3030

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 30.14293 3.66576 8.223 1.02e-15 ***

attend -0.47663 0.13773 -3.461 0.000573 ***

priGPA -2.21470 1.62020 -1.367 0.172101

attend:priGPA 0.20231 0.05878 3.441 0.000614 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 4.354 on 676 degrees of freedom

Multiple R-squared: 0.1491, Adjusted R-squared: 0.1454

F-statistic: 39.5 on 3 and 676 DF, p-value: < 2.2e-16

Our regression equation is:

\[ \hat{Y} = 30.14 - 0.48X_1 - 2.21X_2 + 0.20 X_1 X_2 \]

Visualizing Interactions

We can visualize what interaction effects imply graphically. Interactions between continuous variables bend the regression plane:


Regression Planes Bend?

So what does it mean for a regression plane to bend or not? We can start by thinking about the regression equation without any interactions.

If we say:

\[ \hat{Y} = b_0 + b_1X_1 + b_2X_2 \]

you can see that the slopes \(b_1\) and \(b_2\) are constant, no matter what values you plug into the equation.

This means that we believe the effects of \(X_1\) and \(X_2\) on \(Y\) are independent of each other.

A regression plane is flat only when the slopes are constant (i.e., no interaction is assumed).

Regression Planes Bend Indeed!

Instead, if we say:

\[ \hat{Y} = b_0 + b_1X_1 + b_2X_2 + b_3X_1 X_2 \]

you can see that the slope of \(X_1\) changes depending on the value of \(X_2\), and the slope of \(X_2\) changes depending on the value of \(X_1\). Let’s say that \(X_2 = 2\). Then:

\(\hat{Y} = b_0 + b_1X_1 + 2b_2 + 2b_3X_1\)

↓

\(\hat{Y} = (b_0 + 2b_2) + (b_1 + 2b_3)X_1\)
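The same rearrangement works for any value of \(X_2\), not just \(2\). Written generally (this is only algebra on the interaction equation, with no new assumptions):

```latex
\[
\hat{Y} = (b_0 + b_2X_2) + (b_1 + b_3X_2)X_1
\]
```

So the slope of \(X_1\) is \(b_1 + b_3X_2\): it changes by \(b_3\) for every one-unit increase in \(X_2\). By symmetry, the slope of \(X_2\) is \(b_2 + b_3X_1\).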


What about the Regression coefficients?

\[ \hat{Y} = 30.14 - 0.48X_1 - 2.21X_2 + 0.20 X_1 X_2 \]
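One way to read these coefficients is to compute the slope of attend at a few values of priGPA. The sketch below copies the estimates from the `lm()` output shown earlier. The helper name `slope_attend` is made up for this example. It shows that \(b_1 = -0.48\) is the slope of attend only when priGPA is exactly \(0\).

```r
# Coefficients copied from the lm() output for final ~ attend * priGPA
b <- c(b0 = 30.14293, b1 = -0.47663, b2 = -2.21470, b3 = 0.20231)

# Slope of attend (X1) at a chosen value of priGPA (X2): b1 + b3 * X2
# (`slope_attend` is a helper name made up for this sketch)
slope_attend <- function(priGPA) unname(b["b1"] + b["b3"] * priGPA)

slope_attend(0)        # equals b1: the slope of attend when priGPA is 0
slope_attend(c(2, 3))  # the slope changes as priGPA changes
```

The value we plug in for priGPA decides which slope we see, so the meaning of \(0\) on the moderator matters a lot for interpretation.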

Mean Centering

Turns out that we can transform our variables such that we can decide the meaning of \(0\)! Transforming our predictors such that \(0\) represents the mean is generally a good choice.

We can use the scale() function to center our predictors:

# the scale() function is a bit strange as previously mentioned, so we need to add the [,1] (see what happens to the column name if you don't)

# `scale = FALSE` tells the function not to standardize the variable (i.e., do not make the standard deviation 1)

grade_fin$attend_cnt <- scale(grade_fin$attend, scale = FALSE)[,1]

grade_fin$priGPA_cnt <- scale(grade_fin$priGPA, scale = FALSE)[,1]

Centering changes the means of the variables to 0, but does not change the standard deviations.
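A quick way to check what centering does is to compare the mean and standard deviation before and after. The sketch below uses a simulated variable `x` as a stand-in (it is not the lab data, and its mean and SD are arbitrary) and also shows the by-hand equivalent of `scale(x, scale = FALSE)[, 1]`.

```r
# Simulated stand-in for a predictor (values are made up, not the lab data)
set.seed(7804)
x <- rnorm(100, mean = 26, sd = 5)

# Center with scale(); [, 1] drops the matrix structure and keeps a plain vector
x_cnt <- scale(x, scale = FALSE)[, 1]

# Equivalent by-hand version
x_cnt_manual <- x - mean(x)

# The mean becomes (numerically) 0, the standard deviation is unchanged
c(mean_raw = mean(x), mean_cnt = mean(x_cnt))
c(sd_raw = sd(x), sd_cnt = sd(x_cnt))
all.equal(x_cnt, x_cnt_manual)
```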

Mean Centering Graphically

Changing the mean of a variable simply means shifting it along the x-axis. For example, if we plot the density of the uncentered and centered attend variable:

- The red distribution is the original uncentered variable.

- The blue distribution is the mean-centered variable.

Linear Transformations

Centering and standardizing are known as linear transformations. The “linear” comes from the fact that all these transformations do is shift (and, when standardizing, rescale) the data along a line. You can see that from the graph on the left. These transformations do not change the fit of the model or the test of the interaction term, but they can make the lower-order coefficients easier to interpret.
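A small simulation can make this concrete. In the sketch below, the data and the names `x1`, `x2`, `y`, and `dat` are assumptions made up for illustration. Centering leaves the fitted values and the interaction coefficient untouched, while the lower-order slope of `x1` becomes the slope evaluated at the mean of `x2`.

```r
# Simulated data standing in for the lab data (names and values are assumptions)
set.seed(7804)
n  <- 300
x1 <- rnorm(n, mean = 26, sd = 5)
x2 <- rnorm(n, mean = 2.6, sd = 0.5)
y  <- 30 - 0.5 * x1 - 2 * x2 + 0.2 * x1 * x2 + rnorm(n, sd = 4)
dat <- data.frame(y, x1, x2)

# Center both predictors
dat$x1_c <- dat$x1 - mean(dat$x1)
dat$x2_c <- dat$x2 - mean(dat$x2)

fit_raw <- lm(y ~ x1 * x2, data = dat)
fit_cnt <- lm(y ~ x1_c * x2_c, data = dat)

# Unchanged by centering: fitted values and the interaction coefficient
all.equal(unname(fitted(fit_raw)), unname(fitted(fit_cnt)))
all.equal(unname(coef(fit_raw)["x1:x2"]), unname(coef(fit_cnt)["x1_c:x2_c"]))

# Changed by centering: the lower-order slope of x1 is now evaluated at mean(x2)
b_raw <- coef(fit_raw)
unname(b_raw["x1"] + b_raw["x1:x2"] * mean(dat$x2))  # slope of x1 at mean(x2)
unname(coef(fit_cnt)["x1_c"])                        # centered slope of x1
```

The last two lines should agree: the centered slope is just the uncentered model's slope read off at a different point of the plane.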

Uncentered and Centered Results

Call:

lm(formula = final ~ attend * priGPA, data = grade_fin)

Residuals:

Min 1Q Median 3Q Max

-16.4387 -2.8093 -0.2672 3.0143 11.3030

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 30.14293 3.66576 8.223 1.02e-15 ***

attend -0.47663 0.13773 -3.461 0.000573 ***

priGPA -2.21470 1.62020 -1.367 0.172101

attend:priGPA 0.20231 0.05878 3.441 0.000614 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 4.354 on 676 degrees of freedom

Multiple R-squared: 0.1491, Adjusted R-squared: 0.1454

F-statistic: 39.5 on 3 and 676 DF, p-value: < 2.2e-16

Call:

lm(formula = final ~ attend_cnt * priGPA_cnt, data = grade_fin)

Residuals:

Min 1Q Median 3Q Max

-16.4387 -2.8093 -0.2672 3.0143 11.3030

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 25.63475 0.18284 140.202 < 2e-16 ***

attend_cnt 0.04669 0.03863 1.209 0.227255

priGPA_cnt 3.07500 0.34254 8.977 < 2e-16 ***

attend_cnt:priGPA_cnt 0.20231 0.05878 3.441 0.000614 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 4.354 on 676 degrees of freedom

Multiple R-squared: 0.1491, Adjusted R-squared: 0.1454

F-statistic: 39.5 on 3 and 676 DF, p-value: < 2.2e-16

What Changed?

We can see that the value of the interaction term remains the same, but the values of the intercept and the slopes for \(X_1\) and \(X_2\) change:

\[ \hat{Y} = 30.14 - 0.48X_1 - 2.21X_2 + 0.20 X_1 X_2 \]

\[ \hat{Y} = 25.63 + 0.05X_1 + 3.08X_2 + 0.20 X_1 X_2 \]

In the centered result, where \(0\) represents the mean of \(X_1\) and \(X_2\), both slopes are positive, and indicate that as prior GPA and attendance increase, the final grade…increases! (Note that the slope of attendance, \(0.05\), is small and not significant at the mean prior GPA.)

Nothing changed about our analysis, but in the centered solution we are looking at the slopes at a different point of the regression plane. This point is not out of range, so it makes sense to choose it as a reference point.

Regression Coefficients Flipping When Centering? 🤔

The regression coefficients flipping signs reflects the plane changing direction. Look for that on the plot on the next slide: you should see that the plane changes direction at some point as the values of the predictors change.

Graphical comparison

The Interpretation of The Interaction Term

So far we have discussed why we should not interpret slopes in isolation, but what does the interaction effect, \(0.20\), mean in our case?

So, if our equation is \(\hat{Y} = 25.63 + 0.05X_1 + 3.08X_2 + 0.20 X_1 X_2\)

For a centered prior GPA of \(X_2 = 3.5\) (because \(X_2\) is centered, this means 3.5 points above the mean prior GPA; treat the value as an arithmetic illustration) we get:

\(\hat{Y} = 25.63 + 0.05X_1 + 3.08 \times 3.5 + 0.20 X_1\times 3.5\)

\(\hat{Y} = (25.63 + 10.78) + 0.05X_1 + 0.70X_1\)

\(\hat{Y} = 36.41 + 0.75X_1\)

So, when someone’s prior GPA is \(3.5\) points above the mean, the relation between attendance and final grade strengthens: the slope of \(X_1\) goes from \(0.05\) to \(0.75\).

Sounds Familiar?

If you remember, the interpretation of the quadratic term in Lab 8 is suspiciously similar to an interaction effect 🧐 Could it be that the quadratic term is also an interaction effect? Give it some thought 🤔

Probing Interactions: Simple Slopes

On the previous slide, we plugged in some value for \(X_2\) and looked at the resulting value of the slope for \(X_1\). The resulting \(0.75\) value for the slope of \(X_1\) is generally referred to as a simple slope.

| | Value of priGPA_cnt | Est. | S.E. | 2.5% | 97.5% | t val. | p |
|---|---|---|---|---|---|---|---|
| 1 | -0.545 | -0.064 | 0.036 | -0.135 | 0.008 | -1.742 | 0.082 |
| 2 | 0.000 | 0.047 | 0.039 | -0.029 | 0.123 | 1.209 | 0.227 |
| 3 | 0.545 | 0.157 | 0.061 | 0.037 | 0.276 | 2.577 | 0.010 |

The simple slopes are under the Est. column. Any questions about the other columns?

Among the three values probed, only when priGPA is 1 SD above the mean is the slope of attend_cnt positive and significant. That suggests that attendance helps with the final grade only if your prior GPA is 1 SD above average.
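For anyone curious where the other columns come from, here is a minimal base-R sketch of how a simple slope, its standard error, confidence interval, t value, and p value can be computed from a fitted model. It runs on simulated data (the names `x`, `m`, `y` and the generating values are assumptions). `simple_slope` is a helper written for this example, not a function from any package, and it is not necessarily how the table above was produced.

```r
# Simulated data standing in for the lab data (names and values are assumptions)
set.seed(7804)
n <- 300
x <- rnorm(n)            # focal predictor
m <- rnorm(n, sd = 0.5)  # moderator
y <- 25 + 0.05 * x + 3 * m + 0.2 * x * m + rnorm(n, sd = 4)
fit <- lm(y ~ x * m)

# Simple slope of x at moderator value m0, with SE, 95% CI, t and p.
# `simple_slope` is a helper written for this sketch, not a package function.
simple_slope <- function(fit, m0, focal = "x", int = "x:m") {
  b <- coef(fit)
  V <- vcov(fit)
  est  <- unname(b[focal] + b[int] * m0)
  # Var(b1 + m0 * b3) = Var(b1) + 2 * m0 * Cov(b1, b3) + m0^2 * Var(b3)
  se   <- sqrt(V[focal, focal] + 2 * m0 * V[focal, int] + m0^2 * V[int, int])
  df   <- df.residual(fit)
  crit <- qt(0.975, df)
  tval <- est / se
  data.frame(m0 = m0, est = est, se = se,
             lower = est - crit * se, upper = est + crit * se,
             t = tval, p = 2 * pt(-abs(tval), df))
}

# Probe at the mean of the moderator and 1 SD below/above it
probe_at <- mean(m) + c(-1, 0, 1) * sd(m)
do.call(rbind, lapply(probe_at, simple_slope, fit = fit))
```

The standard error differs from row to row because it depends on the moderator value through the variance formula in the comment.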

Plotting Simple Slopes


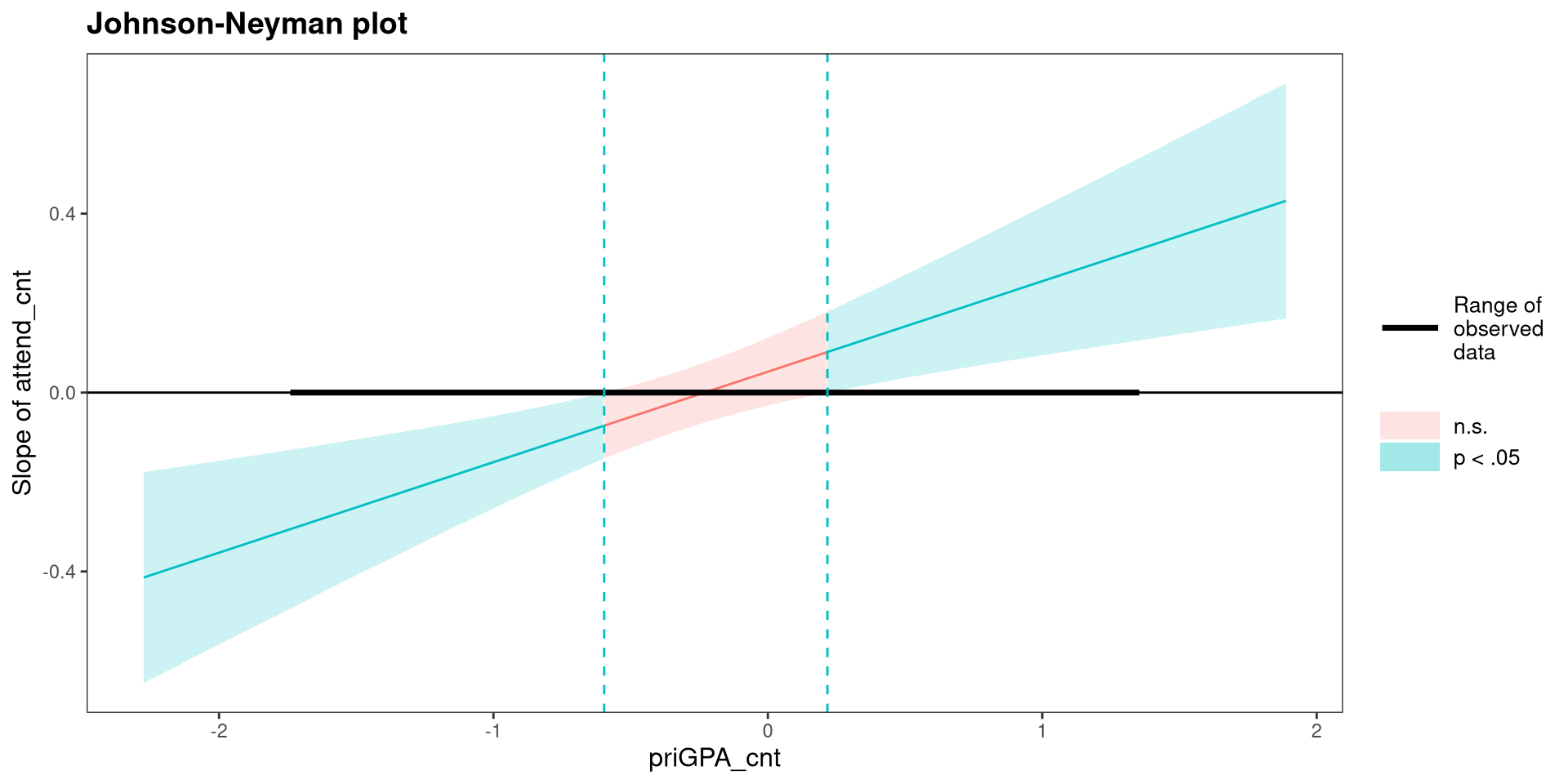

The Johnson-Neyman Plot 😱

- x-axis: the value of the moderator.

- y-axis: the value of the slope of the focal predictor (make sure this makes sense to you!)
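To see what goes into a plot with these two axes, the sketch below computes the slope of the focal predictor and its 95% confidence band over a grid of moderator values, using base R and simulated data (the names `x`, `m`, `y` and the generating values are assumptions). This is a grid-based approximation for illustration, not the exact Johnson-Neyman boundary calculation.

```r
# Simulated data standing in for the lab data (names and values are assumptions)
set.seed(7804)
n <- 300
x <- rnorm(n)
m <- rnorm(n, sd = 0.5)
y <- 25 + 0.05 * x + 3 * m + 0.6 * x * m + rnorm(n, sd = 4)
fit <- lm(y ~ x * m)

b <- coef(fit)
V <- vcov(fit)
m_grid <- seq(min(m), max(m), length.out = 200)

# Slope of the focal predictor x and its 95% confidence band across the moderator
slope <- unname(b["x"] + b["x:m"] * m_grid)
se    <- sqrt(V["x", "x"] + 2 * m_grid * V["x", "x:m"] + m_grid^2 * V["x:m", "x:m"])
crit  <- qt(0.975, df.residual(fit))
lower <- slope - crit * se
upper <- slope + crit * se

# The slope is significant (at alpha = .05) wherever the band excludes 0
sig <- lower > 0 | upper < 0

plot(m_grid, slope, type = "l", ylim = range(lower, upper),
     xlab = "Moderator (m)", ylab = "Slope of focal predictor (x)")
lines(m_grid, lower, lty = 2)
lines(m_grid, upper, lty = 2)
abline(h = 0, col = "grey50")
```

Moderator values where `sig` is `TRUE` are the regions where the slope of the focal predictor differs significantly from zero.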
